SP4096 Near-Cap Dense

Fixed-step 3090 research winner used to rank ideas before remote validation.

OpenAI's Parameter Golf challenge was a model-compression contest: fit a language model into a 16,000,000-byte artifact, keep record-track training under 10 minutes on 8x H100s, and minimize the final tokenizer-agnostic bits-per-byte score after the required quantized roundtrip.
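Bits-per-byte divides the model's total log-loss by the number of UTF-8 bytes in the evaluated text, not by the number of tokens. That is what makes it comparable across tokenizers such as SP1024 and SP4096. The block below is a minimal sketch of that standard definition, assuming per-token negative log-likelihoods in nats. The challenge's own evaluation script may count bytes differently in its details.

```python
import math


def bits_per_byte(token_nll_nats, text):
    """Total negative log-likelihood over the tokens of `text`, in bits per UTF-8 byte."""
    total_bits = sum(token_nll_nats) / math.log(2)  # nats -> bits
    n_bytes = len(text.encode("utf-8"))             # tokenizer-independent denominator
    return total_bits / n_bytes


# Two hypothetical tokenizations of the same 4-byte text with the same total loss
# score identically, even though one uses twice as many tokens.
fine = bits_per_byte([math.log(2)] * 4, "abcd")        # 4 tokens, 1 bit each
coarse = bits_per_byte([2 * math.log(2)] * 2, "abcd")  # 2 tokens, 2 bits each
print(fine, coarse)
```

A tokenizer that produces fewer tokens only helps if it also lowers the total loss over the same bytes.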

I treated this like a solo research sprint. That meant building the experimentation loop, ranking ideas honestly, killing dead ends quickly, and then validating the strongest branch on rented H100 hardware. The full code and tooling for the project live in a public repository.

The hard part of this challenge was that model quality was not enough on its own. The actual objective was the post-roundtrip artifact: train a model that still scores well after int8 export and zlib compression, while staying under the size cap and within the wallclock budget.
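The export path works as a roundtrip. Weights are quantized to int8 and zlib-compressed, and the compressed size is checked against the cap. The artifact is then decompressed and dequantized to recover the weights that actually get scored. The block below is a minimal stdlib-only illustration of that loop. It assumes symmetric per-tensor int8 with a single float32 scale. The packing format and the helper names (`quantize_int8`, `export_artifact`, `load_artifact`) are hypothetical.

```python
import struct
import zlib

SIZE_CAP_BYTES = 16_000_000  # artifact cap from the challenge rules


def quantize_int8(weights):
    """Symmetric per-tensor int8: one scale, values clamped to [-127, 127]."""
    amax = max((abs(w) for w in weights), default=0.0)
    scale = amax / 127 if amax > 0 else 1.0
    return scale, [max(-127, min(127, round(w / scale))) for w in weights]


def export_artifact(weights):
    """Hypothetical format: float32 scale followed by int8 values, zlib-compressed."""
    scale, q = quantize_int8(weights)
    raw = struct.pack("<f", scale) + struct.pack(f"<{len(q)}b", *q)
    return zlib.compress(raw, 9)


def load_artifact(blob):
    """Invert export_artifact: decompress, read the scale, dequantize."""
    raw = zlib.decompress(blob)
    (scale,) = struct.unpack_from("<f", raw, 0)
    q = struct.unpack_from(f"<{len(raw) - 4}b", raw, 4)
    return [v * scale for v in q]


weights = [0.5, -0.25, 0.0, 1.0] * 1000
blob = export_artifact(weights)
restored = load_artifact(blob)  # these are the weights that actually get scored
print("compressed bytes:", len(blob), "legal:", len(blob) <= SIZE_CAP_BYTES)
print("max roundtrip error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Both numbers matter. The compressed length decides legality, and the roundtrip error is what the score actually sees.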

This was not just model tweaking. I ended up building a small research platform around the challenge so I could move faster without lying to myself.

Stage 1: build a trustworthy local ruler. Early 3090 runs were noisy and easy to misread, so I shifted from a loose wallclock loop to a fixed-step exact-roundtrip evaluation path. That single change improved the research quality more than any one architecture tweak, because it stopped me from ranking ideas on noise.
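A fixed-step harness replaces "as many steps as fit in the time budget" with an identical step count and seed for every idea. It also scores the exported weights rather than the float ones. The block below is a toy sketch of that shape. It assumes a tiny linear-regression model in place of the language model, and an int8 quantize-dequantize in place of the full export. `FixedStepConfig` and `run_fixed_step` are hypothetical names.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class FixedStepConfig:
    """Hypothetical run config: every idea gets the same step count and seed."""
    steps: int = 200
    seed: int = 0
    lr: float = 0.05


def int8_roundtrip(weights):
    """Symmetric per-tensor int8 quantize -> dequantize, i.e. the weights that would ship."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 1.0
    return [max(-127, min(127, round(w / scale))) * scale for w in weights]


def make_data(rng, n=64, dim=4):
    """Toy regression data standing in for the language-model training set."""
    true_w = [rng.uniform(-1, 1) for _ in range(dim)]
    xs = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(n)]
    ys = [sum(w * x for w, x in zip(true_w, row)) for row in xs]
    return xs, ys


def mse(weights, xs, ys):
    return sum((sum(w * x for w, x in zip(weights, row)) - y) ** 2 for row, y in zip(xs, ys)) / len(ys)


def run_fixed_step(cfg):
    """Train for exactly cfg.steps SGD steps, then score the roundtripped weights."""
    rng = random.Random(cfg.seed)  # all randomness flows from the seed
    xs, ys = make_data(rng)
    w = [0.0] * len(xs[0])
    for _ in range(cfg.steps):  # fixed step count; no wallclock check
        i = rng.randrange(len(xs))
        err = sum(wj * xj for wj, xj in zip(w, xs[i])) - ys[i]
        w = [wj - cfg.lr * err * xj for wj, xj in zip(w, xs[i])]
    return mse(int8_roundtrip(w), xs, ys)  # toy: scored on the training data


cfg = FixedStepConfig()
print(run_fixed_step(cfg) == run_fixed_step(cfg))  # same config, same score
```

To compare two ideas, change one code path while holding `steps` and `seed` fixed. A score difference then cannot come from one run simply fitting more steps into the budget.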

Stage 2: pressure test ideas instead of falling in love with them. I tried compression-aware training, sidecar eval ideas, shared-block recurrence, sparse attention, ternary shaping, residual-budget tuning, export-side heuristics, and tokenizer changes. Most of the flashy ideas lost once they were measured cleanly.
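"Measured cleanly" can be made concrete by requiring an idea's gain over a matched baseline to clear the seed-to-seed noise. The block below is a minimal sketch of such a rule. It assumes several seeds per arm and a hypothetical threshold of two standard errors. Both the rule and the numbers in the usage lines are illustrative, not the criterion or results from this project.

```python
import statistics


def beats_baseline(baseline_bpb, candidate_bpb, k=2.0):
    """True if the candidate's mean bpb is lower than the baseline's by more than k standard errors.

    Each argument is a list of per-seed bpb scores (at least two per arm).
    """
    gain = statistics.mean(baseline_bpb) - statistics.mean(candidate_bpb)  # lower bpb is better
    std_err = (
        statistics.variance(baseline_bpb) / len(baseline_bpb)
        + statistics.variance(candidate_bpb) / len(candidate_bpb)
    ) ** 0.5
    return gain > k * std_err


# Illustrative numbers only.
baseline = [1.900, 1.910, 1.905]
print(beats_baseline(baseline, [1.880, 1.890, 1.885]))  # consistent gain
print(beats_baseline(baseline, [1.860, 1.950, 1.895]))  # "near-win" buried in noise
```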

Stage 3: spend bytes where they actually mattered. The local story changed once I stopped treating a tiny under-cap model as the frontier. Near-cap dense models plus better export discipline mattered more than many of the clever micro-tricks.
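Spending bytes deliberately starts with a rough budget. Count the parameters, convert them to int8 bytes, and see how much room an assumed compression ratio leaves under the cap. The block below is a back-of-envelope sketch of that budget. It assumes a tied-embedding decoder with grouped-query attention, and it ignores norms, biases and quantization scales. Three readings are assumptions rather than details from this write-up: "SP1024 9x512" as a 1024-token SentencePiece vocabulary with 9 layers of width 512, the head counts, and the MLP multiplier.

```python
SIZE_CAP_BYTES = 16_000_000


def dense_param_count(layers, d_model, vocab, n_heads, n_kv_heads, mlp_mult):
    """Approximate weight count for a tied-embedding decoder (no norms, biases, or scales)."""
    head_dim = d_model // n_heads
    attn = 2 * d_model * d_model + 2 * d_model * n_kv_heads * head_dim  # Q,O + K,V projections
    mlp = 2 * d_model * mlp_mult * d_model                              # up + down projections
    return layers * (attn + mlp) + vocab * d_model                       # + one shared embedding


def headroom_bytes(params, compression_ratio=1.0):
    """Bytes left under the cap at 1 byte per int8 weight, shrunk by an assumed zlib ratio."""
    return SIZE_CAP_BYTES - params / compression_ratio


# Hypothetical configs: same body, two vocabulary sizes; the 1.1 ratio is an assumption.
for vocab in (1024, 4096):
    n = dense_param_count(layers=9, d_model=512, vocab=vocab, n_heads=8, n_kv_heads=4, mlp_mult=2)
    print(vocab, n, round(headroom_bytes(n, compression_ratio=1.1)))
```

Under these assumptions, moving from a 1024 to a 4096 vocabulary costs 1,572,864 extra embedding weights. That is the kind of trade a near-cap budget forces you to price explicitly.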

Stage 4: validate the branch remotely. I used H100 runs to separate local research signals from ideas that genuinely held up on the published full-data track.

1.89258040 on the retokenized subset.1.19816494, but the artifact exploded to 30.9 MB, making it invalid despite the strong score.Lower bits-per-byte is better. Artifact size must stay under the 16,000,000 byte cap.

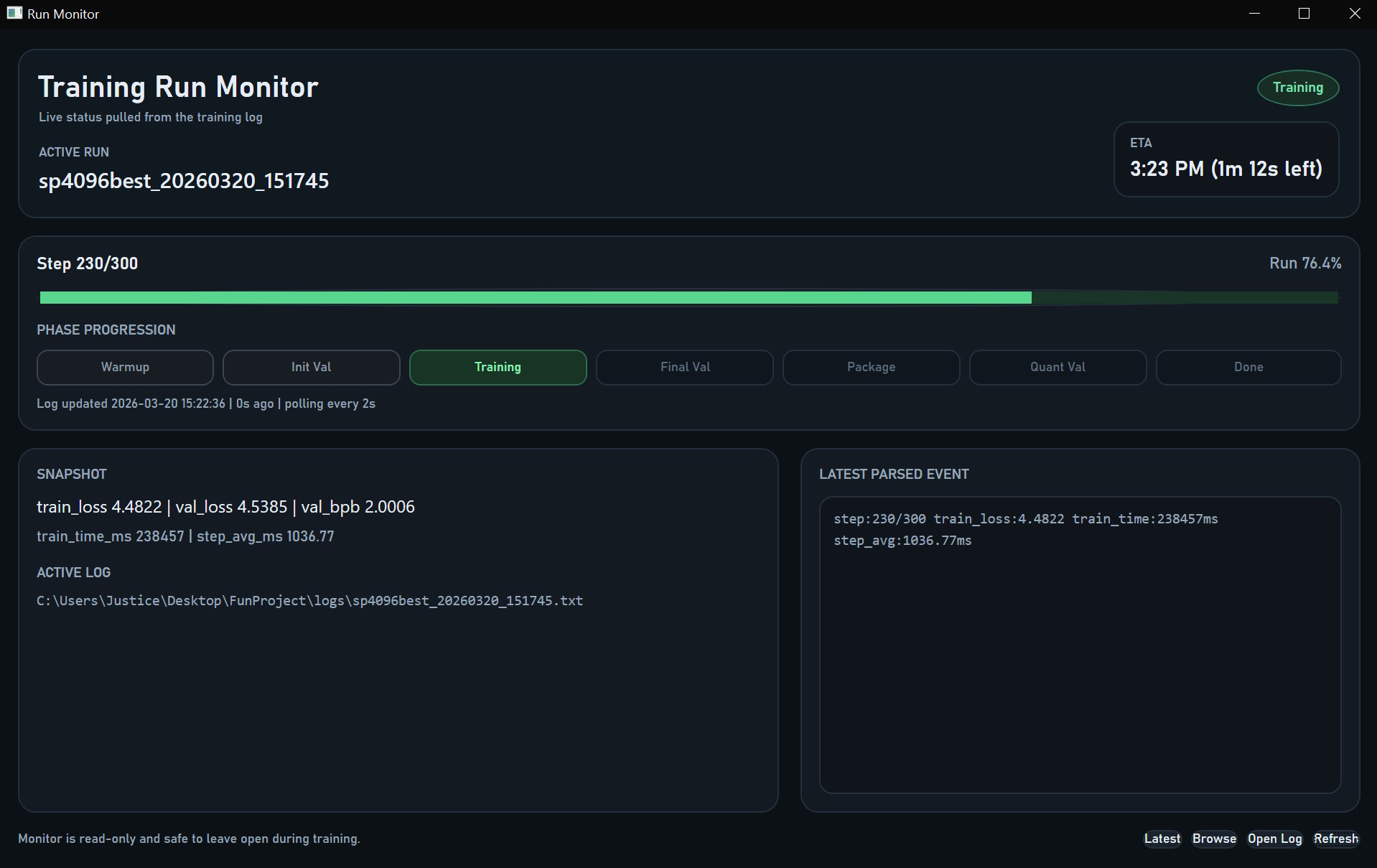

Fixed-step 3090 research winner used to rank ideas before remote validation.

Best recovered full-data H100 run that stayed safely under the artifact cap.

Strongest recovered score overall, but it failed the artifact cap badly.

These numbers mattered because they showed three different things: a strong local research branch, a legal remote control, and a high-quality oversized run that exposed the next bottleneck.

1.89258040 bpb on a near-cap SP4096 branch, useful as a research signal but not directly submission-ready.1.31661720 bpb at 14,912,837 bytes on SP1024 9x512 with targeted residual export.1.19816494 bpb, but invalid because the artifact landed at 30,904,580 bytes.Best measured result from each major branch I explored. Lower bits-per-byte is better.

- Best local research branch on the fixed-step trusted track.
- Tokenization pivot that made the biggest local frontier jump.
- Best earlier local branch before the tokenizer and near-cap pivots.
- Interesting early near-win that became unstable under cleaner measurement.
- Beat the plain matched baseline, but not the dense compression-aware branch.
- Most exciting architectural idea on paper, but clearly not competitive in this tested regime.

This is the core tension of the challenge: score quality mattered, but only if the artifact stayed legal.
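The legality rule is simple enough to encode directly, which keeps oversized runs from being ranked alongside legal ones. The block below is a minimal sketch that applies it to the two full-data H100 results reported in this write-up. Treating the cap as inclusive is an assumption.

```python
SIZE_CAP_BYTES = 16_000_000  # treated as inclusive here (assumption)

# (label, bpb, artifact bytes) as reported in this write-up; lower bpb is better.
RUNS = [
    ("SP1024 9x512, targeted residual export", 1.31661720, 14_912_837),
    ("oversized 8x H100 run", 1.19816494, 30_904_580),
]


def best_legal(runs, cap=SIZE_CAP_BYTES):
    """Lowest-bpb run among those whose artifact fits under the cap, or None."""
    legal = [run for run in runs if run[2] <= cap]
    return min(legal, key=lambda run: run[1]) if legal else None


for label, bpb, size in RUNS:
    print(f"{label}: {bpb:.8f} bpb, margin {SIZE_CAP_BYTES - size:+,} bytes")
print("best legal:", best_legal(RUNS)[0])
```

The oversized run wins on raw score but drops out before ranking. The legal run keeps roughly a megabyte of headroom for further export-side changes.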

This case study shows the kind of work I enjoy most: ambiguous technical constraints, incomplete information, a lot of dead-end space, and the need to build systems around the problem rather than only attack the most obvious surface-level fix.

It combined research thinking, engineering discipline, automation, metrics literacy, and a willingness to throw away ideas that did not survive clean measurement. Even without a final challenge submission, it produced a real body of evidence, tooling, and conclusions that could immediately guide a better-funded next round.

With a larger compute budget, the next move would be to keep the legal full-data SP1024 9x512 branch as the remote control, then reintroduce only the export-side changes that have actually transferred cleanly. The goal would be to convert the oversized 8x H100 quality signal into a cap-legal submission path, instead of starting another wide search from scratch.
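The reintroduction step can be pictured as a greedy loop. It starts from the legal control and keeps an export-side change only if the run stays under the cap and bpb improves. The block below is a sketch of that loop, but the procedure is hypothetical and was not run in the project. `evaluate` stands in for a full remote training-and-export run. The change names and their effects in the usage lines are made up for illustration.

```python
from typing import Callable, Iterable, Tuple

SIZE_CAP_BYTES = 16_000_000


def reintroduce_greedily(
    control: frozenset,
    candidates: Iterable[str],
    evaluate: Callable[[frozenset], Tuple[float, int]],
):
    """Add export-side changes one at a time, keeping each only if it stays legal and lowers bpb."""
    current = control
    best_bpb, size = evaluate(current)
    if size > SIZE_CAP_BYTES:
        raise ValueError("the control run must already be cap-legal")
    for change in candidates:
        trial = current | {change}
        bpb, size = evaluate(trial)
        if size <= SIZE_CAP_BYTES and bpb < best_bpb:
            current, best_bpb = trial, bpb
    return current, best_bpb


# Made-up effects of three hypothetical export changes: (bpb delta, byte delta).
TOY_EFFECTS = {"change_a": (-0.010, 200_000), "change_b": (-0.020, 2_000_000), "change_c": (0.005, 0)}


def toy_evaluate(config):
    bpb, size = 1.3166172, 14_912_837  # start from the legal SP1024 9x512 control
    for change in sorted(config):
        d_bpb, d_size = TOY_EFFECTS[change]
        bpb, size = bpb + d_bpb, size + d_size
    return bpb, size


print(reintroduce_greedily(frozenset(), ["change_a", "change_b", "change_c"], toy_evaluate))
```

In this toy run, `change_b` improves the score but is rejected because it pushes the artifact over the cap. That mirrors the failure mode of the oversized run.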
